Outrank Your Competitors. Get Found Online.

Search engine marketing (SEM) is an umbrella term for increasing a website’s visibility in search engine results pages, encompassing both paid and organic activities. SEO is the organic side of that effort.

At its core, SEO focuses on expanding a company’s visibility in the organic search results. It helps businesses rank more pages higher in search engine results pages (SERPs).

That, in turn, drives more visitors to the site and increases the chances of conversion. SEO also shows you how consumers search for and find information about your brand and your competitors online.

Search engine optimization (SEO) is the foundation for every digital marketing strategy.

Simply put, if you’re not getting found when your target audience is searching for information and solutions in search engines, you’re not going to hit your goals. And without an SEO strategy in place, you are falling behind your competitors.

SEO MARKETING

The effectiveness of your marketing is limited by your ability to be found by the right people online. Search engine optimization helps ensure your content is visible.

Your prospects have questions. You have answers.

You just need to get in front of them. The search engine results pages get more competitive with every algorithm update, and a solid SEO strategy is key to outranking your competitors. From identifying impactful keywords to building domain authority to perfecting the technical aspects of your site’s performance, we can help you get found online.

Our Unique Differentiators

- Industry Focus & Domain Expertise

- Right-Sized, Agile: Big Enough to Deliver, Small Enough to Care

- Flexible Engagement Models and Pricing

And that’s it.

The Benefits of SEO Marketing

At MapleSage, we believe B2B SEO marketing and content marketing go hand in hand: SEO draws users to your site, while content marketing keeps them there and helps convert them. If you build great content, you will organically drive the other aspects of search engine optimization, such as link building and keyword targeting (by leveraging content marketing strategies and a variety of content).

By incorporating SEO strategies into your marketing efforts, you’ll increase your website’s visibility and rankings. SEO is a longer-term investment than many other digital marketing channels.

- Target vertical markets by repurposing content and aggregating related content into targeted landing pages

- Maximize traffic results through niche SEO keyword ballooning

- Optimize with on-page SEO and keywords

- Increase inbound links and PageRank

- Drive organic link building by focusing on the pillars of link bait

- Improve the user experience metrics that matter

- Leverage social interaction through integrated sharing strategies, such as content unlocked by sharing, contest entries, etc.

- Focus on a strategy that targets inbound user content discovery rather than outbound, marketing-crafted content

Selecting an SEO Agency

The result: minimal growth in ranking potential and big losses to competitors.

Why Hire an SEO Company

- Individual projects – These plans charge a one-time fee to complete a specific task.

- Monthly retainers – These suit businesses that want a long-term relationship with an SEO company.

- Performance – A rare find, performance-based plans tie payment to whether your SEO firm achieves the results you pay for.

- Hourly – This option bills the agency’s services at an hourly rate.

The best B2B SEO strategies are an ongoing partnership.

- Keyword ranking reports

- Opportunities to leverage viral content based on current trends or hot topics in your industry

- Ongoing work with copywriters to expand online content

- Strategies for implementing user-generated content, such as product reviews, client testimonials, and user-submitted articles

- Strategy updates based on changes in the search industry

Key Initiatives

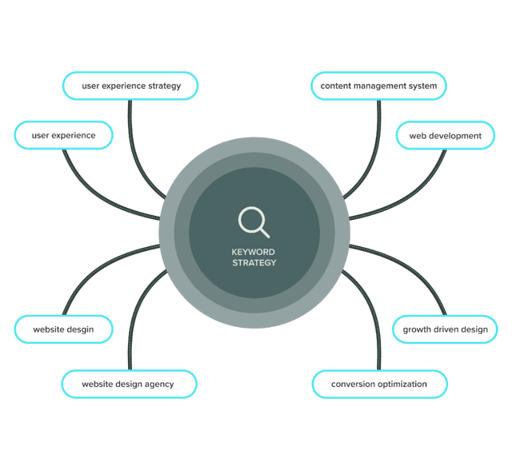


Keyword strategy and research

We’ll put together a comprehensive keyword strategy centered around your personas’ pains and interests and strengthen your ability to get found for competitive terms using topic clusters.

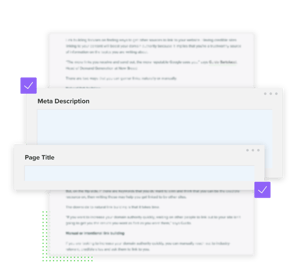


On-page optimization

Your ability to rank in search results starts with the content on each webpage. We’ll make sure your content, headings, URLs and meta descriptions all follow best practices and that there are no technical errors holding you back.


Backlinking strategy and outreach

In addition to the work on your site, you can improve your search ranking by building your authority off-site. We’ll identify opportunities for guest posts and backlinks and help you conduct the necessary outreach.

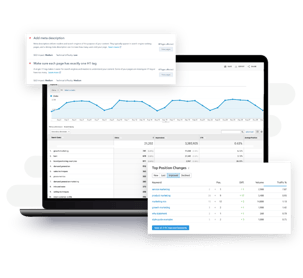


Reporting and competitive analysis

Are there gaps in your competitors’ keyword strategies that you can take advantage of? We’ll identify them and provide regular recommendations for improving your own search and content strategies.